Agentic AI teams face a practical choice. They can build one capable agent. Or they can orchestrate many specialized agents. Both routes can work. Yet they behave very differently in production. This guide compares single-agent and multi-agent systems in agentic AI in clear, operational detail. It focuses on architecture, tradeoffs, and real examples. It also links to recent reports and papers so you can verify key claims.

Adoption signals also explain why this choice matters now. Gartner predicts 60% of brands will use agentic AI to deliver streamlined one-to-one interactions by 2028. At the same time, broader AI use keeps rising. Stanford HAI reports that 78% of organizations used AI in 2024. So, more teams now ship agents into real workflows. That raises the stakes for picking the right system design.

What Is a Single-Agent System in Agentic AI?

A single agent system uses one agent as the main decision maker. It can still use tools, memory, and retrieval. However, it does not split work across multiple independent agents.

Definition. A single agent system is one autonomous workflow controller. It interprets the goal, plans steps, calls tools, and returns outputs. It usually runs in a tight loop: observe, decide, act, and reflect.

How Single-Agent Architecture Works. Most single agent designs follow a predictable path. First, the agent reads the user goal and context. Next, it selects a plan. Then, it calls tools such as search, a database, code execution, or a ticketing system. After that, it checks results and decides the next action.
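The loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: the `decide` function stands in for a model call, and the tool names (`search`, `lookup_order`) and the order ID are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical tools. A real agent would call search, a database, and so on.
def search(query: str) -> str:
    return f"results for {query!r}"

def lookup_order(order_id: str) -> str:
    return f"order {order_id}: shipped"

TOOLS: dict[str, Callable[[str], str]] = {
    "search": search,
    "lookup_order": lookup_order,
}

@dataclass
class AgentState:
    goal: str
    # Each entry is (tool name, argument, result).
    history: list[tuple[str, str, str]] = field(default_factory=list)

def decide(state: AgentState) -> Optional[tuple[str, str]]:
    """Stand-in for the model call: pick the next tool, or None when done."""
    if not state.history:
        return ("search", state.goal)
    if len(state.history) == 1:
        return ("lookup_order", "A-100")
    return None

def run_agent(goal: str, max_steps: int = 5) -> AgentState:
    state = AgentState(goal=goal)
    for _ in range(max_steps):             # hard cap so the loop always ends
        action = decide(state)             # decide
        if action is None:
            break
        tool_name, arg = action
        result = TOOLS[tool_name](arg)     # act
        # Record the result so the next decision can take it into account.
        state.history.append((tool_name, arg, result))
    return state
```

One controller owns every decision here, which is what keeps the trace easy to follow.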

Single agent systems often include these building blocks:

- Goal and constraints. The agent starts with a clear objective and clear limits.

- Tool layer. The agent can query APIs, run scripts, or trigger workflows.

- Memory. The agent stores short term context and long term facts.

- Retrieval. The agent pulls relevant documents or records when needed.

- Guardrails. The agent checks policy, safety, and formatting before it acts.

- Observability. Logs capture prompts, tool calls, and outputs for debugging.
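Two of these building blocks, guardrails and observability, can share one thin wrapper around every tool call. The sketch below assumes a simple allow-list policy. The tool names and the `GuardrailError` class are hypothetical, and real guardrails usually check much more than the tool name.

```python
import logging
from typing import Callable

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent")

# Hypothetical policy: the only tools this agent may use.
ALLOWED_TOOLS = {"search", "read_ticket"}

class GuardrailError(Exception):
    """Raised when the agent tries something the policy forbids."""

def guarded_call(tool_name: str, tool: Callable[[str], str], arg: str) -> str:
    # Guardrail: check policy before the agent acts.
    if tool_name not in ALLOWED_TOOLS:
        log.warning("blocked tool=%s arg=%r", tool_name, arg)
        raise GuardrailError(f"tool {tool_name!r} is not allowed")
    result = tool(arg)
    # Observability: log the call and its output for later debugging.
    log.info("tool=%s arg=%r result=%r", tool_name, arg, result)
    return result
```

Because every call passes through one function, the log doubles as the single trace you debug from.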

The design pattern matters. Many teams use a plan-then-execute loop. Some teams use a ReAct-style loop that interleaves reasoning and tool calls. Others use a task graph inside a single agent, where the agent still owns every decision. This keeps control simple. It also keeps the mental model clear.

Pros. A single agent can feel faster to build and easier to operate. The reasons are practical.

- Lower coordination overhead. One agent means fewer moving parts.

- Simpler debugging. You follow one chain of decisions.

- Clear ownership. One controller decides what happens next.

- Predictable failure modes. Errors usually come from tool calls, missing data, or weak instructions.

- Cheaper orchestration. You avoid extra agent to agent chatter.

Cons. Single agent systems hit limits when the task grows. They can still succeed, but you often pay in reliability or latency.

- Context pressure. One agent must hold many details at once.

- Role conflict. One agent must plan, execute, verify, and explain. That can reduce quality.

- Harder specialization. You can prompt a persona, yet the same model still does everything.

- Weaker parallel work. One agent usually acts in sequence, not in parallel.

- Limited cross checks. You rely on self review, which can miss errors.

Best Use Cases. Single agent systems shine when the workflow is linear and the boundaries are clear.

Common fits include:

- Structured automation. Example: triage support tickets, route them, and draft replies from a knowledge base.

- Internal copilots. Example: answer staff questions from policy documents and past tickets.

- Data lookups with tools. Example: pull a customer record, summarize account history, and suggest next steps.

- Personal productivity agents. Example: schedule tasks, draft content, or generate briefs with a fixed template.

Here is a concrete example. A finance assistant agent handles invoice intake. It reads an invoice, extracts key fields, checks them against purchase orders, and flags mismatches. The agent calls OCR, then calls an ERP API, then drafts a short approval note. One agent works well because the workflow stays consistent. The system also benefits from a strict checklist and strong validation rules.
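The validation step in this example can be sketched as plain Python. The sketch assumes OCR and the ERP lookup have already produced structured records. The field names, the tolerance, and the note wording are illustrative choices, not part of any real ERP API.

```python
from dataclasses import dataclass

@dataclass
class Invoice:
    po_number: str
    vendor: str
    total: float

@dataclass
class PurchaseOrder:
    po_number: str
    vendor: str
    total: float

def find_mismatches(invoice: Invoice, po: PurchaseOrder,
                    tolerance: float = 0.01) -> list[str]:
    """Return the names of fields that disagree between invoice and PO."""
    mismatches = []
    if invoice.po_number != po.po_number:
        mismatches.append("po_number")
    # Compare vendor names case-insensitively and ignore stray whitespace.
    if invoice.vendor.strip().lower() != po.vendor.strip().lower():
        mismatches.append("vendor")
    if abs(invoice.total - po.total) > tolerance:
        mismatches.append("total")
    return mismatches

def approval_note(invoice: Invoice, po: PurchaseOrder) -> str:
    """Draft the short note the agent attaches to the invoice."""
    issues = find_mismatches(invoice, po)
    if issues:
        return f"HOLD {invoice.po_number}: mismatch in {', '.join(issues)}"
    return f"APPROVE {invoice.po_number}: matches purchase order"
```

Keeping the checks in ordinary code, rather than in the prompt, is one way to get the strict validation rules this workflow depends on.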

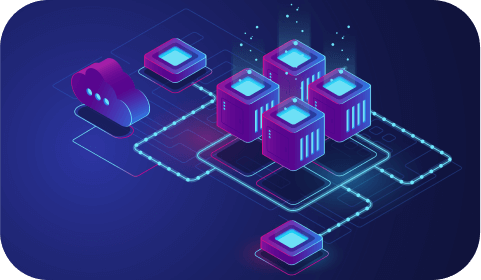

What Is a Multi-Agent System in Agentic AI?

A multi-agent system differs from a single-agent system in that it splits work across multiple agents. Each agent has a role, a narrow tool set, and a narrow responsibility. A coordinator then routes tasks and merges results.

Definition. A multi agent system is a coordinated group of agents that collaborate to complete a goal. Each agent acts semi independently. Yet the system still enforces shared rules, shared state, and shared success criteria.

How Multi-Agent Architecture Works. A typical multi agent workflow starts with a router or manager. That manager reads the goal and breaks it into sub tasks. Then, it assigns each sub task to a specialist agent. After that, the system collects outputs, resolves conflicts, and produces a final answer or action plan.
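A minimal manager-and-specialists flow might look like the sketch below. The specialist functions and the fixed `plan` are stand-ins for model calls, and all names are hypothetical. A real manager would plan dynamically and resolve conflicts between outputs.

```python
from typing import Callable

# Hypothetical specialist agents. Each one handles a single kind of sub task.
def research_agent(task: str) -> str:
    return f"notes on {task}"

def writer_agent(task: str) -> str:
    return f"draft about {task}"

SPECIALISTS: dict[str, Callable[[str], str]] = {
    "research": research_agent,
    "write": writer_agent,
}

def plan(goal: str) -> list[tuple[str, str]]:
    """Stand-in for the manager's model call: split the goal into (role, sub task)."""
    return [("research", goal), ("write", goal)]

def run_manager(goal: str) -> str:
    outputs = []
    for role, subtask in plan(goal):
        specialist = SPECIALISTS[role]          # assign the sub task by role
        outputs.append(f"[{role}] {specialist(subtask)}")
    return "\n".join(outputs)                   # merge into one final answer
```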

Multi agent designs often use these patterns:

- Manager and workers. A manager plans and delegates. Worker agents execute focused steps.

- Committee or debate. Several agents propose answers. Another agent judges and selects.

- Pipeline specialization. One agent researches, another drafts, another verifies, and another formats.

- Blackboard. Agents write partial results to shared state. Others build on it.

- Tool isolation. Each agent gets only the tools it needs, which reduces risk.
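As one illustration of these patterns, the committee pattern can be reduced to a few lines. The three agents below return canned answers in place of model calls, and the judge is a simple majority vote. Real judges are often another model call with its own prompt, so treat this as a sketch of the shape only.

```python
from collections import Counter
from typing import Callable

# Hypothetical committee members with canned answers.
def agent_a(question: str) -> str:
    return "42"

def agent_b(question: str) -> str:
    return "42"

def agent_c(question: str) -> str:
    return "41"

def judge(proposals: list[str]) -> str:
    """Select the most common proposal (a plain majority vote)."""
    return Counter(proposals).most_common(1)[0][0]

def run_committee(question: str, agents: list[Callable[[str], str]]) -> str:
    proposals = [agent(question) for agent in agents]   # every agent proposes
    return judge(proposals)                             # the judge selects
```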

Frameworks make this easier. For example, AutoGen is a framework for creating multi-agent AI applications that can act autonomously or work alongside humans. It lets agents converse with each other to accomplish tasks. In practice, teams define roles such as planner, coder, reviewer, and executor. They also define interaction rules such as turn limits and stopping conditions. Frameworks like this let teams implement orchestration without hand-building every loop.

Multi agent systems also benefit from recent evaluation work. One study reports 87.4% on GPQA Diamond using multi turn multi agent orchestration in its benchmark setup. That result does not mean multi agent always wins. However, it supports a key idea. Orchestration can match strong single model performance when you design the interaction well.

Pros. Multi agent systems pay off when the problem needs specialization, verification, or parallel work.

- Specialized reasoning. Each agent can focus on one domain or one step.

- Better internal checks. One agent can challenge another, which reduces blind spots.

- Parallel execution. Agents can research, draft, and validate at the same time.

- Safer tool access. You can restrict sensitive tools to a single agent.

- Modular scaling. You can add or swap agents without rewriting the whole system.

Cons. Multi agent systems cost more to design and operate. The pain often shows up after the first demo.

- Coordination complexity. Agents can loop, disagree, or duplicate work.

- Higher latency. Extra turns can slow down responses.

- Higher cost. More agent calls usually means higher compute spend.

- State management risk. Shared memory can drift or get polluted.

- Harder evaluation. Many paths can lead to an answer, which complicates testing.
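The latency and cost points above come down to simple arithmetic. The sketch below is a back-of-the-envelope model, not a billing formula. Every number in it is made up, and it assumes all calls cost the same and split evenly across parallel workers.

```python
import math

def estimate_run(calls: int, tokens_per_call: int, price_per_1k_tokens: float,
                 seconds_per_call: float, parallel_width: int = 1) -> tuple[float, float]:
    """Return (cost, latency in seconds) for one run under simple assumptions."""
    cost = calls * tokens_per_call / 1000 * price_per_1k_tokens
    # Calls that run side by side share one round of latency.
    rounds = math.ceil(calls / parallel_width)
    return cost, rounds * seconds_per_call

# Hypothetical comparison: 3 sequential calls vs 12 calls run 3 at a time.
single = estimate_run(calls=3, tokens_per_call=2000,
                      price_per_1k_tokens=0.01, seconds_per_call=2.0)
team = estimate_run(calls=12, tokens_per_call=2000,
                    price_per_1k_tokens=0.01, seconds_per_call=2.0,
                    parallel_width=3)
```

With these illustrative inputs, the team run costs about four times as much as the single-agent run, while parallelism keeps the latency gap small (four rounds versus three).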

Best Use Cases. Multi agent systems fit tasks that look like a small team effort.

Common fits include:

- Complex research and synthesis. Example: one agent gathers sources, another extracts claims, another writes, another fact checks.

- Software engineering workflows. Example: a planner writes tasks, a coder writes changes, a tester runs checks, a reviewer verifies style.

- Security and operations. Example: an incident triage agent gathers signals, a containment agent proposes actions, an approval agent checks policy.

- Procurement and vendor analysis. Example: one agent compares offers, another checks legal terms, another models total cost, another drafts a recommendation.

Consumer trust also affects multi agent success. If your system talks directly to customers, you need careful orchestration and clear escalation. Adobe reports it surveyed 3,000 executives and practitioners and 4,000 customers globally in its latest research program. That kind of work highlights a simple reality. Customers judge agent quality by consistency and clarity, not by how many agents you run behind the scenes.

Infrastructure readiness also matters. Cisco and Omdia report 80% of executives believe agentic AI will be essential for survival by 2027. Multi agent systems put more stress on networks, identity controls, and observability. So, orchestration choices must match your platform maturity.

Key Differences Between Single-Agent and Multi-Agent Systems in Agentic AI

The key difference between single-agent and multi-agent systems is not just agent count. The real difference is how the system manages complexity. Single-agent systems centralize reasoning. Multi-agent systems distribute reasoning and then reconcile it.

Use the table below as a fast decision aid. Then, read the notes after it for deeper context.

| Dimension | Single-Agent System | Multi-Agent System |
|---|---|---|
| Control model | One controller plans and acts end to end | Coordinator delegates and merges specialist outputs |
| System complexity | Fewer moving parts and simpler state | More components and stricter state discipline |
| Specialization | One agent must juggle roles | Each agent can focus on one role or domain |
| Verification | Mostly self review inside one loop | Peer review through critique, voting, or separate validators |
| Speed | Fewer turns, often faster for simple tasks | More turns, can be slower unless parallelized well |
| Cost profile | Cheaper orchestration, fewer calls | Higher orchestration cost, more calls and retries |
| Failure modes | Wrong plan, tool misuse, missing context | Coordination loops, conflict, duplicated work, state drift |
| Security design | One agent often needs broad tool access | Tool isolation by role supports least privilege |
| Testing and evaluation | Deterministic tests are easier to set up | More paths require scenario-based testing and robust telemetry |
| Best fit | Clear workflows with stable steps | Open-ended workflows that need expertise and cross checks |

Control and ownership drive many downstream effects. With a single agent, one prompt and one policy set often control the system. Therefore, changes feel simpler. With multi agent systems, each role needs its own prompt, tools, and limits. So, you must treat roles like services.

Verification also changes the game. A single agent can reflect and revise. However, self review can miss the same blind spot twice. In contrast, a multi agent review step can challenge assumptions. It can also catch tool errors, missing evidence, or inconsistent tone.

Security and governance often push teams toward multiple agents. A single agent that both reads sensitive data and triggers destructive actions creates risk. In a multi agent setup, you can separate duties. One agent can read logs. Another can propose actions. A third can request approval. This mirrors real operational controls.
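This separation of duties can be sketched with per-role tool allow-lists and an approval gate. The roles, tool names, and return strings below are hypothetical. A production system would enforce the same boundaries in its identity and access layer, not only in application code.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Role:
    name: str
    allowed_tools: frozenset

# Hypothetical tools: one read-only, one destructive.
TOOLS = {
    "read_logs": lambda target: f"logs for {target}",
    "restart_service": lambda target: f"restarted {target}",
}

def call_tool(role: Role, tool_name: str, target: str) -> str:
    # Least privilege: a role can only reach the tools on its own list.
    if tool_name not in role.allowed_tools:
        raise PermissionError(f"{role.name} may not call {tool_name}")
    return TOOLS[tool_name](target)

READER = Role("reader", frozenset({"read_logs"}))
EXECUTOR = Role("executor", frozenset({"restart_service"}))

def handle_incident(target: str, approved: bool) -> str:
    evidence = call_tool(READER, "read_logs", target)   # read-only step
    proposal = ("restart_service", target)              # the proposer holds no tools
    if not approved:                                    # approval gate
        return f"pending approval: {proposal[0]} on {target} ({evidence})"
    return call_tool(EXECUTOR, *proposal)               # runs only after approval
```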

Cost and latency still matter. Multi agent systems can waste cycles if you let agents debate endlessly. So, you need hard stop rules. You also need crisp handoffs. If you do this well, you can keep a multi agent system efficient. If you do it poorly, it will feel slow and expensive.
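A hard stop rule can be as simple as a turn cap on the review loop. In the sketch below, `propose` and `critique` stand in for two agents, and the function name and the `max_turns` default are illustrative assumptions.

```python
from typing import Callable, Optional

def run_review_loop(
    propose: Callable[[Optional[str]], str],
    critique: Callable[[str], Optional[str]],
    max_turns: int = 3,
) -> tuple[str, bool]:
    """Return (final draft, approved). Stops on approval or after max_turns critiques."""
    draft = propose(None)                 # first draft, no feedback yet
    for _ in range(max_turns):
        feedback = critique(draft)
        if feedback is None:              # the critic accepts: clean handoff
            return draft, True
        draft = propose(feedback)         # revise using the feedback
    # Hard stop: return the latest draft and flag it as unapproved.
    return draft, False
```

Returning the `approved` flag alongside the draft lets the caller decide what an unapproved result means, for example by routing it to a human.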

Investment trends also explain why teams now revisit this choice. Stanford HAI reports that private investment in generative AI reached $33.9 billion. That scale of funding pushes tools and platforms forward fast. As a result, multi-agent orchestration is becoming more accessible. Yet the core tradeoff stays the same. More structure can improve quality, but only if you manage complexity.

When Should You Choose Single-Agent vs Multi-Agent?

1. Choose Single-Agent If

Your workflow has a clear path

Choose a single agent when steps stay stable. This includes workflows with a fixed order, a fixed template, and a clear end state. The agent can follow a checklist and finish reliably.

You need fast iteration

Single-agent systems let you ship sooner. You can improve prompt clarity, add one tool, and see results quickly. You also keep the system easier to monitor.

You want simple operations

One agent produces one main trace. That helps your team debug quickly. It also helps you build a clear evaluation set.

Your risk surface is small

Some use cases do not require strict separation of duties. For example, an internal summarizer agent that cannot take actions has a smaller blast radius. In that case, one agent often suffices.

Practical examples

- Customer support draft replies. The agent reads a ticket, searches a knowledge base, and drafts a response.

- Sales call summaries. The agent summarizes a transcript and updates a CRM note via one safe API.

- Content brief generation. The agent creates a structured brief from a keyword list and brand rules.

For these tasks, a single agent can still use strong guardrails. It can validate outputs, cite sources, and request human review when confidence is low. So, you do not lose safety by default. You just keep the architecture simpler.

2. Choose Multi-Agent If

Your task requires multiple kinds of thinking

Multi agent systems help when the task mixes research, reasoning, verification, and execution. Each role can focus. Therefore, quality often improves.

You need strong internal checks

Choose multi agent when mistakes are costly. A reviewer agent can challenge a planner agent. A policy agent can block risky actions. This setup reduces single point failures.

You must separate tools and permissions

Multi agent designs support least privilege. You can isolate sensitive tools and enforce approval gates before actions run.

You want parallel work

Some goals benefit from parallel progress. One agent can search docs. Another can query metrics. Another can draft the final output. Then, a coordinator merges results. This feels like a real team workflow.
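The fan-out and merge step can be sketched with Python's standard `concurrent.futures` module. The two worker functions are hypothetical stand-ins for agents, and the 30-second timeout is an arbitrary choice. Threads suit this case because agent calls mostly wait on network I/O.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical worker agents. Real ones would call a model or an API.
def search_docs(goal: str) -> str:
    return f"docs for {goal}"

def query_metrics(goal: str) -> str:
    return f"metrics for {goal}"

def run_parallel(goal: str) -> dict[str, str]:
    workers = {"docs": search_docs, "metrics": query_metrics}
    with ThreadPoolExecutor(max_workers=len(workers)) as pool:
        # Submit every worker first so they run side by side.
        futures = {name: pool.submit(fn, goal) for name, fn in workers.items()}
        # Then collect results. The timeout keeps one slow agent from blocking forever.
        return {name: future.result(timeout=30) for name, future in futures.items()}

def merge(results: dict[str, str]) -> str:
    """Coordinator step: combine worker outputs in a stable order."""
    return "; ".join(f"{name}: {results[name]}" for name in sorted(results))
```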

Practical examples

- Software delivery assistant. Planner, coder, tester, and reviewer roles collaborate to propose a patch and validate it.

- Security triage. Signal collection, hypothesis, containment suggestion, and approval roles reduce risk.

- Enterprise procurement analysis. Research, legal review, financial modeling, and executive summary roles produce a stronger recommendation.

Multi agent does not guarantee success. You still need disciplined orchestration. Set clear roles. Limit turn counts. Enforce shared definitions. Then, measure outcomes with tests and telemetry. When you do this, multi agent systems can scale beyond what one agent can manage.

Multi agent also fits better when humans must supervise. You can insert human approval at the right place. You can also route uncertain cases to a human. This makes the system safer and more trustworthy.

Choice depends on your constraints, not hype. Start with the simplest system that can meet your reliability target. Then, add agents only when you can explain why each new role reduces risk or increases quality.

Single agent remains a strong default for many teams. Yet multi agent becomes compelling when tasks look like team work. The best strategy often blends both. One top level agent can route tasks. Then, it can call a small multi agent team only when complexity rises.
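That blended approach can be sketched as a small router. The keyword heuristic, the threshold, and both handler functions are hypothetical. A real router would more likely use a classifier or a model call to judge complexity.

```python
# Hypothetical signals that a request needs more than one kind of expertise.
COMPLEXITY_SIGNALS = ("compare", "legal", "forecast", "audit")

def estimate_complexity(request: str) -> int:
    """Count how many complexity signals appear in the request."""
    text = request.lower()
    return sum(signal in text for signal in COMPLEXITY_SIGNALS)

def single_agent(request: str) -> str:
    return f"single-agent answer: {request}"

def multi_agent_team(request: str) -> str:
    return f"team answer: {request}"

def route(request: str, threshold: int = 2) -> str:
    # Default to the simple path and escalate only when complexity rises.
    if estimate_complexity(request) >= threshold:
        return multi_agent_team(request)
    return single_agent(request)
```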

That hybrid mindset also matches how real organizations operate. Simple requests follow a standard playbook. Complex requests trigger escalation, review, and specialized expertise. Agentic AI systems work best when they follow the same logic.

Conclusion

Smart agent design starts with the same rule every team follows. Match your architecture to your real workflow. Choose one agent when the path stays clear. Choose multiple agents when you need specialization, parallel work, and stronger internal checks.

We help teams turn that decision into a system that ships. Designveloper was founded in early 2013, and we bring 12 years of delivery experience into every agent build. We have logged 500,000+ working hours while building digital products that must stay reliable in real use. That includes Lumin and Swell & Switchboard, plus many other platforms where performance, security, and UX all matter.

When you want to implement agentic AI, we can cover the full build. We design the workflow, tools, and guardrails, then we develop the product through web application development services and mobile app development services. We also deliver production grade intelligence through AI development services, and we harden deployments with Cyber Security Consultant Services. Bring us your use case, and we will help you pick the right agent setup and launch it with confidence.
