AI Agent Vs LLM: How They Differ And Why Businesses Need Both

As AI adoption increases, many teams wonder: should they use a large language model (LLM), an AI agent, or both? The answer shapes architecture, cost, governance, and how much human oversight teams need. However, many teams confuse the two technologies, which makes it hard to choose the right option for the problem at hand.

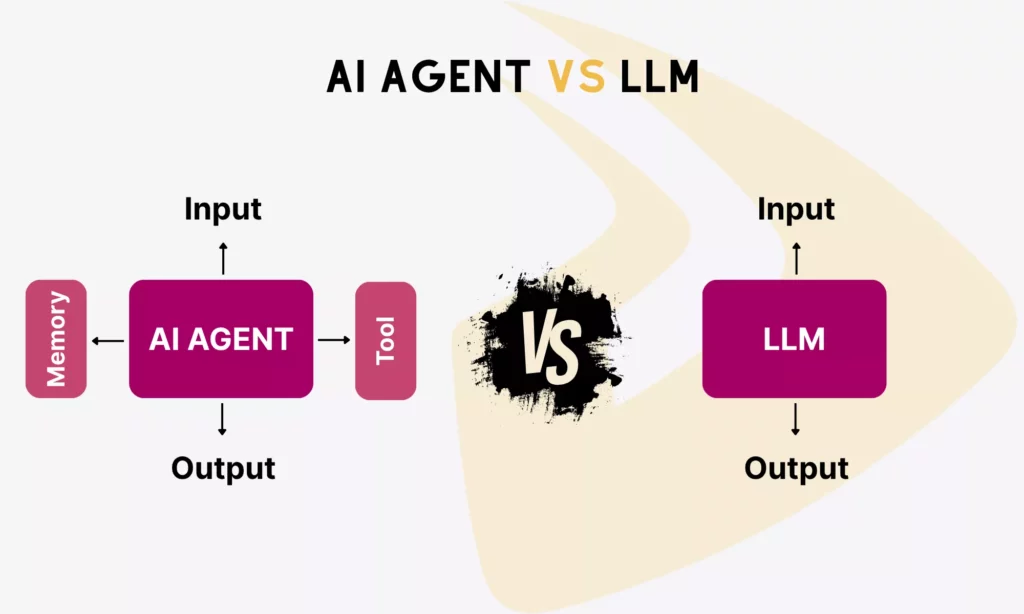

The confusion is understandable. LLMs and AI agents are closely related; Anthropic has described agents simply as LLMs using tools in a loop. But at their core, they solve different problems.

This article compares the key differences between an AI agent vs LLM, covering how each technology works, where each one fits, and how they complement each other. It also includes a decision framework with real-world scenarios for teams to choose from.

What Is An LLM?

A large language model (LLM) is an AI system trained on massive text datasets to understand and generate human language. It does not have the ability to take autonomous action or interact with external systems on its own.

According to AWS, LLMs are built on transformer architectures, a type of neural network designed to process and generate sequences of text. During training, they learn statistical patterns across language. When users submit a prompt, the model repeatedly predicts the most likely next token (a word or word fragment) and assembles a human-like response.
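To make next-word prediction concrete, here is a deliberately tiny sketch. It is not a transformer: it only counts which word follows which in a made-up sentence, which is the same "learn patterns, then predict what comes next" idea at toy scale. The corpus and function names are illustrative assumptions.

```python
from collections import Counter, defaultdict

def train_bigram(corpus: str) -> dict[str, Counter]:
    """Count, for every word, which words follow it in the training text."""
    follows: dict[str, Counter] = defaultdict(Counter)
    words = corpus.lower().split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def predict_next(model: dict[str, Counter], word: str):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    candidates = model.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]

# Made-up training text; a real LLM learns from vastly more data.
corpus = "the model reads the prompt and the model predicts the next word"
model = train_bigram(corpus)

print(predict_next(model, "the"))     # → model
print(predict_next(model, "prompt"))  # → and
```

A real LLM replaces the counting table with billions of learned parameters and looks at the whole preceding context rather than a single word, but the output step is still a prediction of what comes next.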

Well-known LLMs include OpenAI’s GPT series, Google Gemini, Anthropic Claude, and Meta’s Llama. These models power AI tools that people use every day (like ChatGPT, Google Gemini, and Claude) to write content, summarize documents, generate code, translate between languages, explain complex concepts, and even generate images.

In short: LLMs provide the intelligence layer that powers AI applications. But in their base form, they cannot take actions autonomously, maintain context across sessions, or interact with external systems without additional architecture.

What Is An AI Agent?

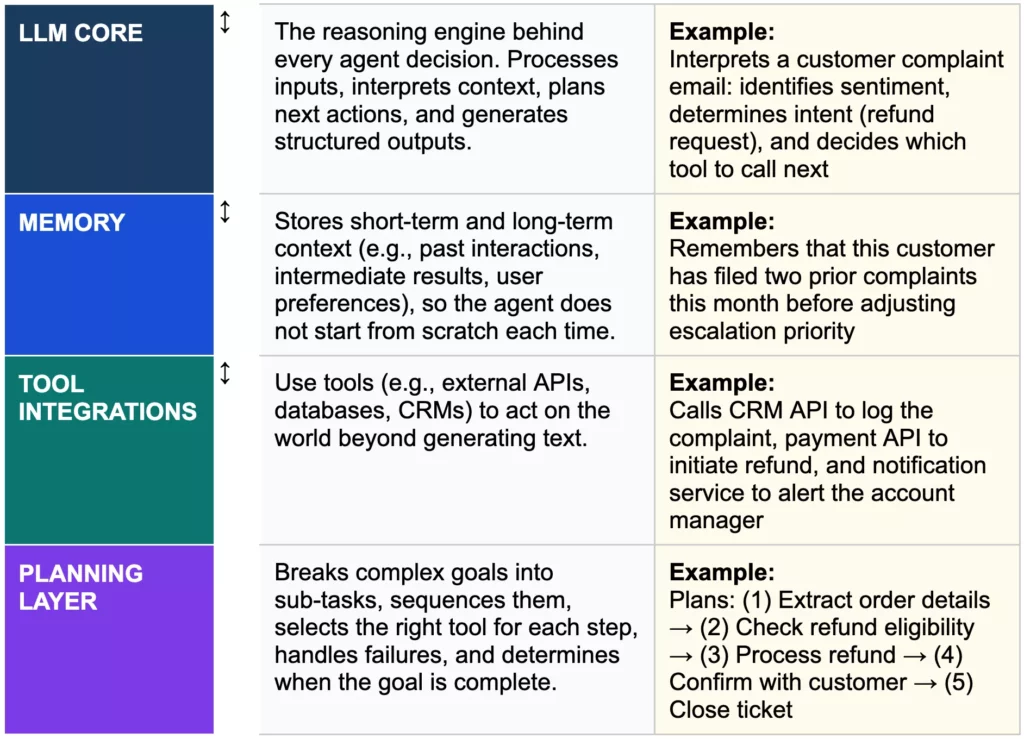

An AI agent is a software system that uses an LLM as its reasoning core and adds memory, tool access, and planning to execute multi-step goals autonomously, as defined by Google Cloud.

By comparison, an LLM waits for instructions and produces a response, while an AI agent operates with a sense of direction. Given an objective, an agent figures out the required steps, adjusts along the way, and continues until the task is complete, without requiring a prompt or human intervention for each action.
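The "LLM using tools in a loop" idea can be sketched in a few lines. This is a minimal illustration under stated assumptions: `scripted_llm` is a hypothetical stand-in for a real model call, and `get_weather` is a hypothetical tool that returns canned data.

```python
from typing import Callable

def get_weather(city: str) -> str:
    """Hypothetical tool: returns canned data instead of calling a real API."""
    return f"Sunny in {city}"

# Registry of tools the agent is allowed to call.
TOOLS: dict[str, Callable[[str], str]] = {"get_weather": get_weather}

def scripted_llm(goal: str, observations: list[str]) -> dict:
    """Stand-in for a model call: picks the next step from what has been observed."""
    if not observations:
        return {"action": "get_weather", "input": "Singapore"}
    return {"action": "finish", "input": f"Answer: {observations[-1]}"}

def run_agent(goal: str, max_steps: int = 5) -> str:
    """Loop: ask the model for the next action, run the tool, feed the result back."""
    observations: list[str] = []
    for _ in range(max_steps):
        decision = scripted_llm(goal, observations)
        if decision["action"] == "finish":
            return decision["input"]
        tool = TOOLS[decision["action"]]
        observations.append(tool(decision["input"]))
    # The step limit keeps a confused agent from looping forever.
    return "Stopped: step limit reached"

result = run_agent("What is the weather in Singapore?")
print(result)  # → Answer: Sunny in Singapore
```

The user supplies one goal; the loop, not the user, decides when to call a tool and when to stop.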

AI agents are often called LLM agents because they are built around an LLM as the core reasoning engine. The agent architecture layers four additional capabilities on top:

AI Agent Vs LLM: The Key Differences At A Glance

The clearest way to set them apart: LLMs generate language, while AI agents execute goals. The table below shows the key differences across the dimensions that help teams make informed deployment decisions:

| Differences | LLM (Large Language Model) | AI Agent |
|---|---|---|
| Primary purpose | Understand and generate language | Execute tasks and achieve goals autonomously |
| Goal-setting | Relies on user prompts for every action | Pursues a defined goal across multiple steps independently |
| Autonomy | Reactive (waits for input each time) | Semi- or fully autonomous (plans and acts without step-by-step prompting) |
| Action | Generates text and multimodal responses only | Executes tasks via APIs, databases, and external system integrations |
| Tool use | Limited (via function calling in structured setups) | Actively selects and calls the right tools in the right sequence |
| State management | No memory between sessions by default | Maintains memory and context across steps and sessions |
| Workflow complexity | Handles single-step or loosely connected tasks | Manages multi-step, multi-system workflows end to end |
| Decision-making | Pattern-based language prediction | Iterative planning, reasoning, and course-correction |
| Adaptability | Limited to the scope of a single prompt | Adapts based on outcomes, failures, and changing conditions |
| Human oversight | Output must be reviewed before any action is taken | Can operate with minimal intervention for well-governed tasks |
| Best when | Language output is the final deliverable with no action required | The goal requires multi-step execution across systems with minimal human input |

Autonomy And Goal-Setting

LLMs are fundamentally prompt-driven. Each prompt is a complete, isolated instruction, and the quality of the output depends heavily on the precision of that input.

AI agents approach tasks differently. Given a high-level objective, they break it into steps, execute each one, and iterate on the results without requiring a new prompt for each action.

While an LLM requires a human to drive every step, an agent drives the workflow itself.

Action And Tool Use

LLMs generate language. They can explain how to do something, but they do not do it. Through function calling, LLMs can produce structured outputs like JSON that trigger external processes. However, the integration logic still sits outside the model.
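Here is a minimal sketch of what "the integration logic still sits outside the model" means in practice. The JSON string stands in for structured output a model might produce through function calling; its exact shape, the `send_email` handler, and the address are illustrative assumptions, not any specific vendor's format.

```python
import json

def send_email(to: str, subject: str) -> str:
    """Hypothetical integration; a real one would call an email service."""
    return f"queued email to {to}: {subject}"

# Application-side registry: the model only names a function, it never runs it.
HANDLERS = {"send_email": send_email}

# Assumed example of structured model output.
model_output = (
    '{"name": "send_email", '
    '"arguments": {"to": "ops@example.com", "subject": "Weekly report"}}'
)

def dispatch(raw: str) -> str:
    """Parse the model's JSON and run the matching handler in application code."""
    call = json.loads(raw)
    handler = HANDLERS.get(call["name"])
    if handler is None:
        raise ValueError(f"unknown tool: {call['name']}")
    return handler(**call["arguments"])

outcome = dispatch(model_output)
print(outcome)  # → queued email to ops@example.com: Weekly report
```

Everything after `json.loads` is ordinary application code. The model's contribution ends at producing the JSON.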

AI agents natively select and call the right tools in the right sequence. For example, they pull sales data, update dashboards, send emails, and trigger alerts without a prompt per action.

State Management

Raw LLMs are stateless. Each interaction starts fresh unless context is manually included in the prompt, which is why long conversations can lose track of earlier details. For single-step tasks, statelessness is not a problem. But for anything that spans sessions or systems, it becomes a hard ceiling.

AI agents introduce structured memory. They store past actions, recall previous results, and use accumulated context to guide future decisions. This makes them suitable for ongoing workflows like customer onboarding, invoice processing, or sales pipeline management.
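A rough sketch of the difference: a raw model call sees only the prompt it is handed, so an agent keeps its own record of past steps and injects it into the next prompt. The `AgentMemory` class and the invoice events below are hypothetical, and real agents often back this with a database or vector store.

```python
from dataclasses import dataclass, field

@dataclass
class AgentMemory:
    """Keeps a running log of what the agent has done so far."""
    events: list[str] = field(default_factory=list)

    def remember(self, event: str) -> None:
        self.events.append(event)

    def context(self, last_n: int = 3) -> str:
        """Return the most recent events as one string for the next prompt."""
        return " | ".join(self.events[-last_n:])

def build_prompt(memory: AgentMemory, question: str) -> str:
    """The model stays stateless; state arrives through the prompt."""
    return f"Previous steps: {memory.context()}\nQuestion: {question}"

memory = AgentMemory()
memory.remember("invoice extracted")
memory.remember("invoice validated")

prompt = build_prompt(memory, "What is the next step?")
print(prompt)
```

The model itself has not changed. The surrounding system simply decides what history to carry forward on each call.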

Workflow Complexity

LLMs handle single-step or loosely connected tasks well, like summarizing a document, writing a code snippet, or rewriting a paragraph. When tasks require multiple steps, integration with external tools, or autonomous decisions across systems, standalone LLMs reach their limit.

AI agents handle these complex scenarios better. They take a high-level goal, decompose it into steps, call the appropriate tools in sequence, and report results without human intervention between steps.

Real Examples: LLM Vs AI Agent In Action

Understanding the technical differences is one thing. Seeing them applied to real workflows makes the distinction between LLMs vs AI agents concrete:

Drafting an Email: LLM

Suppose a user opens an LLM-powered tool (like ChatGPT) and asks it to draft an email. The model then analyzes the prompt, draws on training data to predict what the email should look like, and generates a polished response in one pass.

If the user needs changes, they refine the prompt and the model responds again. In this case, the model does not access external systems, update records, or take further actions beyond text generation.

But if the user sets up ChatGPT with memory or tool access, it can shift toward agentic behavior. For example, it can pull context from previous conversations, adjust tone based on past preferences, or integrate with tools to send the message directly.

In both cases, the underlying model is the same, yet the architecture around it changes what it can do.

Booking a Flight: AI Agent

LLMs alone can compare flight options, suggest destinations, or draft a travel plan. But they cannot complete a booking.

Given a prompt like ‘Book the cheapest flight to Singapore on July 25, 2026,’ an AI agent searches flight APIs, filters results by price and timing, checks availability, and proceeds with the booking once conditions are met. If prices change mid-task, the agent adjusts its strategy and retries without waiting for a new instruction.
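The search, book, and retry behavior can be sketched against a mock service. Everything here is hypothetical: `MockFlightAPI`, the flight codes, and the prices are invented for illustration, and the mock is rigged so that the cheapest fare changes between search and booking.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Flight:
    code: str
    price: float

class MockFlightAPI:
    """Hypothetical flight service whose cheapest fare rises on the first booking."""

    def __init__(self) -> None:
        self.prices = {"FLIGHT_A": 310.0, "FLIGHT_B": 280.0}
        self.price_changed = False

    def search(self) -> list[Flight]:
        return [Flight(code, price) for code, price in self.prices.items()]

    def book(self, flight: Flight) -> bool:
        if not self.price_changed:
            # Simulate the fare changing between search and booking.
            self.prices["FLIGHT_B"] = 330.0
            self.price_changed = True
        # Booking succeeds only if the quoted price is still current.
        return self.prices[flight.code] == flight.price

def book_cheapest(api: MockFlightAPI, max_attempts: int = 3) -> Optional[Flight]:
    """Search, try the cheapest option, and search again if the booking fails."""
    for _ in range(max_attempts):
        cheapest = min(api.search(), key=lambda f: f.price)
        if api.book(cheapest):
            return cheapest
    return None

booked = book_cheapest(MockFlightAPI())
print(booked)  # → Flight(code='FLIGHT_A', price=310.0)
```

The first attempt fails because FLIGHT_B's fare rose after the search. The second attempt searches again, finds FLIGHT_A is now cheapest, and books it with no new instruction from the user.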

Content, Research, and Knowledge Work: Both

For pure content and research work (e.g., drafting articles, brainstorming ideas, or summarizing reports), LLMs are the primary tool. The task begins and ends with language.

When the requirement extends beyond content creation to automation (optimizing, reformatting, and scheduling content across platforms without manual steps), AI agents become the right choice. The LLM handles interpretation and generation while the agent handles distribution and sequencing.

Workflow Automation Across Business Systems: AI Agent

LLMs can analyze text or suggest next steps within a workflow, but they do not connect systems or execute actions across them. In this case, businesses need AI agents for workflow automation across many systems.

An agent monitors inputs like emails or form submissions, triggers actions across tools (CRM updates, notifications, approvals), and coordinates multiple steps in sequence.

When Should You Use An LLM, An AI Agent, Or Both?

As AI adoption deepens across industries, the real question now is: “When should I use which one?” The decision matrix below maps common business scenarios to the right choice, with reasons and real-world examples:

| Scenario | Recommended | Why | Real-world Example |
|---|---|---|---|
| Language-only task: drafting, summarizing, translating, explaining, classifying | LLM only | Language is both input and final output. No external action or system integration required. | Marketing team generating weekly social copy or summarizing analyst reports |
| Single-step task: one prompt in, one response out, interaction complete | LLM only | Statelessness is not a problem. Agent architecture adds cost and complexity with no additional value. | Developer asking an LLM to explain a function, generate a regex, or rewrite a paragraph |
| High-volume, cost-sensitive, language-only tasks | LLM only | Agents make 5–20× more LLM calls per task due to planning loops, adding cost for no benefit on simple tasks. | FAQ answering at scale where no record update or system action is required |
| Multi-step workflow requiring actions across systems | AI Agent | Requires tool orchestration, sequencing, and state management that LLMs alone cannot provide. | Processing an insurance claim: read → extract → validate → update CRM → notify team |
| Real-time decision-making based on live or changing data | AI Agent | LLMs generate static responses. Agents observe, reason, and act on signals as they change. | Fraud detection scanning transactions and blocking anomalies in real time without human queuing |
| Complex, unstructured input + action execution required | Both (hybrid) | LLM interprets unstructured language; agent executes across systems based on that interpretation. | Reading a customer complaint email, extracting intent, updating CRM, and sending a follow-up |
| Dynamic workflows with error recovery and self-correction | Both (hybrid) | LLM reasons about failures; agent reroutes or retries with minimal human intervention. | Multi-step onboarding flow that handles missing data, API failures, or approval delays autonomously |
| High-governance / compliance-sensitive workflows | LLM + human review (or supervised agent) | Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027, citing escalating costs, unclear business value, or inadequate risk controls. Governance must precede deployment. | Financial reporting or legal document review requiring audit trails and approval checkpoints |

Use An LLM When

LLMs are the right choice when the task begins and ends with language and requires no external systems, multi-step decisions, or action execution. This applies to writing, summarizing, translating, explaining, classifying, and answering questions where a well-formed response is the complete deliverable. It also applies to high-volume, cost-sensitive tasks where agent architecture adds cost without adding value.

Use An AI Agent When

An AI agent becomes necessary when the task moves beyond generating language into executing a goal. This applies when workflows require end-to-end automation across systems, when tools must be called in a specific sequence, or when decisions must be made in real time based on live data.

Use Both When

Most production AI systems use LLMs and agents together. The LLM provides the cognitive layer to understand context, interpret language, and reason through problems. Meanwhile, the agent provides the operational layer of planning, executing, and adapting across systems.

This combined approach is most valuable when tasks involve both unstructured language inputs and multi-step system actions. It also works when workflows must self-correct around failures, API errors, or changing conditions without human intervention.

Why Combining LLMs and AI Agents Delivers Better Outcomes

As discussed, many organizations are moving toward combining LLMs and AI agents rather than choosing between them. The reason is simple: each technology has clear limitations when used in isolation.

An LLM without an agent is a powerful reasoning system that can analyze, generate insights, and understand context. But it cannot execute actions or interact with external systems. Conversely, an agent without a capable LLM can automate tasks. But it lacks the ability to interpret intent, reason through complexity, or adapt to nuanced inputs.

When combined, these limitations disappear. LLMs act as the reasoning layer, providing language understanding and decision support, while AI agents serve as the execution layer, coordinating workflows and taking action across systems. Together, they enable end-to-end automation, from understanding a request to actually completing it.

This shift is part of a broader change in how software is being designed. As highlighted by Gartner, LLMs are emerging as a new “operating system” layer for AI, while agents function more like applications that execute tasks on top of that intelligence. In this model, traditional software does not disappear. Instead, it moves into the background as infrastructure that agents operate on behalf of users.

That architectural shift explains why combining both is not just a design choice, but a practical necessity. Users are no longer interacting directly with software interfaces; instead, they delegate goals to systems that can both understand and act.

Ultimately, the advantage does not come from using more advanced models, but from structuring systems correctly. LLMs think. Agents act. The real value emerges when both are designed to work together as a single operational layer.

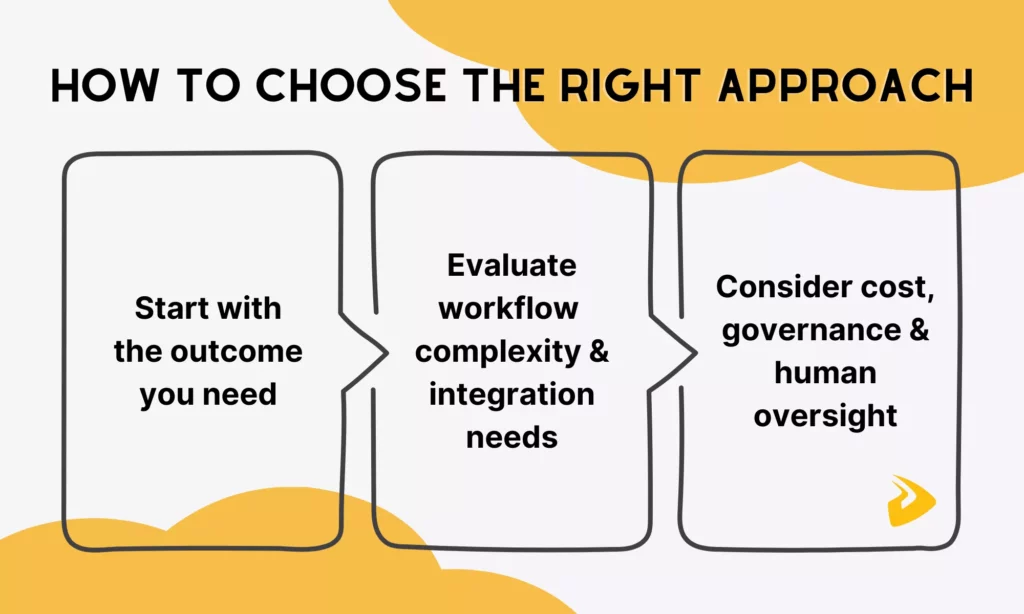

How To Choose The Right Approach For Your Business

Once the distinction between LLMs and agents is clear, it’s time to learn how to choose the right approach for your business:

Start With The Outcome You Need

First, do not rush to choose AI technology. Instead, ask: “What does success actually look like for this problem?”

If the desired outcome is a piece of content, an insight, a summary, a recommendation, or any other form of structured language output, an LLM is likely sufficient.

But if the desired outcome is a completed action (e.g., an updated record or a resolved ticket), an AI agent is a better option.

If the outcome involves both understanding unstructured input and acting on it across systems, a combined approach is necessary.

Choosing between these patterns depends on task breadth, external dependencies, latency budgets, and governance requirements. The technology choice follows from the outcome definition, not the other way around.
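The outcome-first questions above can be condensed into a small decision helper. This is a simplified sketch: the function name and its two boolean inputs are assumptions made for illustration, and a real evaluation would also weigh latency, cost, and governance.

```python
def recommend_approach(needs_action: bool, unstructured_input: bool) -> str:
    """Map the desired outcome to a starting architecture."""
    if not needs_action:
        # The deliverable is language: content, an insight, a summary.
        return "LLM"
    if unstructured_input:
        # Must understand free-form input and then act on it across systems.
        return "LLM + AI agent"
    # The deliverable is a completed action, such as an updated record.
    return "AI agent"

print(recommend_approach(needs_action=False, unstructured_input=True))  # → LLM
print(recommend_approach(needs_action=True, unstructured_input=False))  # → AI agent
print(recommend_approach(needs_action=True, unstructured_input=True))   # → LLM + AI agent
```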

Evaluate Workflow Complexity And Integration Needs

Once the outcome is clear, the second question is whether the workflow involves multiple steps or integrations with external tools.

LLMs shine for single-step tasks with no integration requirements. Meanwhile, tasks that call even one external API or require multi-step sequencing benefit from agentic architecture.

The tipping point is not the complexity of the language task. Instead, it depends on whether the system needs to act on the world, not just respond to it.

Consider Cost, Governance, And Human Oversight

Capability alone does not determine the right approach. One study found that agents make significantly more LLM calls than CoT (Chain-of-Thought) prompting due to iterative planning, tool selection, result evaluation, and error recovery. For language-only tasks, that overhead adds cost without adding value.
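A back-of-the-envelope calculation shows how call volume drives cost. The 5× and 20× multipliers come from the decision matrix earlier in this article; the task volume and price per call are assumed figures chosen only to keep the arithmetic easy to follow.

```python
def monthly_cost(tasks: int, calls_per_task: int, cost_per_call: float) -> float:
    """Total spend = tasks x LLM calls per task x price per call."""
    return tasks * calls_per_task * cost_per_call

TASKS_PER_MONTH = 10_000  # assumed workload
COST_PER_CALL = 0.01      # assumed price in dollars, not a vendor quote

llm_only = monthly_cost(TASKS_PER_MONTH, 1, COST_PER_CALL)
agent_low = monthly_cost(TASKS_PER_MONTH, 5, COST_PER_CALL)    # 5x calls
agent_high = monthly_cost(TASKS_PER_MONTH, 20, COST_PER_CALL)  # 20x calls

print(f"LLM only: ${llm_only:,.2f}")
print(f"Agent:    ${agent_low:,.2f} to ${agent_high:,.2f}")
```

Under these assumptions, the same workload costs roughly 100 dollars a month with single LLM calls and between 500 and 2,000 dollars with an agent. That overhead is only worth paying when the task actually needs planning and tool use.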

Governance is equally important. Teams without robust governance frameworks should not deploy full agent architectures, especially for high-stakes tasks. Human oversight remains important for both technologies in workflows that touch revenue, compliance, or sensitive data.
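One common way to keep a human in the loop is an approval gate in front of high-stakes actions. The sketch below is illustrative only: the action names and the `approve` callback are hypothetical, and in production the callback would typically open a review ticket or prompt an operator.

```python
from typing import Callable

# Hypothetical list of actions that must never run without sign-off.
HIGH_STAKES = {"issue_refund", "delete_record"}

def execute_with_oversight(
    action: str,
    run: Callable[[], str],
    approve: Callable[[str], bool],
) -> str:
    """Run low-risk actions directly; ask a human before high-stakes ones."""
    if action in HIGH_STAKES and not approve(action):
        return f"{action}: blocked pending human approval"
    return run()

# A reviewer who rejects everything, used here to show the gate working.
always_reject = lambda action: False

print(execute_with_oversight("send_summary", lambda: "summary sent", always_reject))
# → summary sent
print(execute_with_oversight("issue_refund", lambda: "refund issued", always_reject))
# → issue_refund: blocked pending human approval
```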

How Designveloper Builds AI Workflows That Fit Your Real Business Needs

Selecting the right technology (LLM, agent, or hybrid) is only the starting point. The harder challenge is connecting that technology to real business workflows: existing systems, team processes, data structures, and governance requirements. This is where many businesses look for a reliable, experienced partner to integrate AI into their workflows.

Designveloper is a Vietnam-based AI-first software development and automation company. Rather than delivering generic AI integrations, our approach starts with real workflow problems. We map where language understanding is needed, where autonomous execution adds value, and where human oversight must remain in the loop before building the technology layer.

That approach helps us deliver successful projects across different industries, including:

- Lumin: Provides in-document chatbots, summarization, agreement generation, translation, and smart redaction.

- Lodg: Supports structured back-office tasks for finance and accounting teams. It helps teams with invoice extraction, autofill, transaction categorization, and tax-related classification.

- HRM: An internal assistant on top of Mattermost. It uses an agentic architecture and integrates directly with internal systems to handle tasks (such as leave requests, approvals, booking, and policy lookups).

For teams evaluating whether to integrate AI capabilities into existing processes or build workflow automation to streamline high-volume, repetitive tasks, Designveloper helps translate AI capabilities into production-ready workflows. Talk to our team now!

FAQs About AI Agent Vs LLM

Is ChatGPT An LLM Or An AI Agent?

ChatGPT is primarily an LLM-based app. It’s a conversational interface built on top of OpenAI’s GPT models to receive a prompt and generate a response. When extended with capabilities like web browsing, memory, or tool access, ChatGPT exhibits agentic behavior. In those configurations, it is better described as an assistive agent rather than a fully autonomous AI agent, because in most setups, it still waits for the next user prompt rather than pursuing a goal independently.

Do AI Agents Require LLMs?

Not always. But in practice, most modern AI agents are built around one.

Historically, agents were rule-based systems that followed fixed decision trees without any language understanding. They could execute tasks, but could not interpret ambiguous input, reason through novel situations, or communicate naturally with users. LLMs equip modern AI agents with those capabilities. That’s why modern AI agents are often referred to as LLM agents.

Can An LLM Become An AI Agent?

Yes. And this is exactly how most AI agents are built today. An LLM on its own is a powerful reasoning and language engine, but it has no mechanism to act on its own. Once an LLM is integrated with external tools, memory, and planning capabilities, it becomes an AI agent that can execute actions autonomously.

What Is The Difference Between An AI Agent And A Background Job Calling An LLM API?

The key difference: a background job calling an LLM API executes a fixed script, while an AI agent runs a reasoning loop.

A background job is often an LLM wrapper. When it calls an LLM API, it’s using the LLM as a text expert to handle static logic within a predefined workflow. Accordingly, it runs on a fixed schedule or trigger, sends a predefined prompt, receives a response, and passes it somewhere else. The background job doesn’t adapt based on what the LLM returns but simply moves data from one place to another.

An AI agent uses the LLM as a reasoning engine to decide which tool to use and when to call it. It also autonomously breaks complex goals into sub-tasks, executes them, self-corrects failures, and adjusts its strategies.
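The contrast can be shown with the same stand-in model used two ways. `canned_llm`, the tool names, and the ticket text are all hypothetical. The background job always follows the same path, while the agent lets the model's answer choose the tool (a single iteration of its loop is shown for brevity).

```python
def canned_llm(prompt: str) -> str:
    """Stand-in for an LLM API call with fixed, predictable answers."""
    if prompt.startswith("Summarize"):
        return "summary of ticket"
    # Any other prompt is treated as a request to choose a tool.
    return "page_on_call" if "outage" in prompt else "add_to_backlog"

# Hypothetical tools the agent can choose between.
TOOLS = {
    "page_on_call": lambda ticket: f"paged on-call for: {ticket}",
    "add_to_backlog": lambda ticket: f"backlogged: {ticket}",
}

def background_job(ticket: str) -> str:
    """Fixed script: one predefined prompt, response passed along unchanged."""
    summary = canned_llm(f"Summarize: {ticket}")
    return f"stored: {summary}"

def agent(ticket: str) -> str:
    """The model's output decides which tool runs next."""
    tool_name = canned_llm(f"Choose a tool for: {ticket}")
    return TOOLS[tool_name](ticket)

print(background_job("db outage"))  # → stored: summary of ticket
print(agent("db outage"))           # → paged on-call for: db outage
print(agent("typo on page"))        # → backlogged: typo on page
```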
